Caylent Services

Cloud Native App Dev

Deliver high-quality, scalable, cloud native, and user-friendly applications that allow you to focus on your business needs and deliver value to your end users faster.

Cut AWS Lambda cold start times by up to 90% with AWS Lambda SnapStart and Firecracker, minimizing latency without any additional caching costs.

AWS Lambda is a powerful serverless compute service provided by Amazon Web Services (AWS) that allows developers to run code without provisioning or managing servers. With Lambda, developers upload code organized into functions, and AWS automatically scales the underlying resources in response to incoming requests while providing built-in fault tolerance.

Lambda functions can be triggered by a wide range of events, including changes to objects in Amazon S3, updates to an Amazon DynamoDB table, or messages published to an Amazon Simple Notification Service (SNS) topic.
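To make the event-driven model concrete, here is a minimal sketch of a Python handler for S3 event notifications. Lambda passes the event payload (a dict) and a context object to the handler; the handler name, the trimmed-down sample event, and the bucket and key values are illustrative assumptions, and real S3 events carry many more fields.

```python
import urllib.parse


def handler(event, context):
    """Minimal sketch of a Lambda handler for S3 event notifications.

    Reads the bucket name and object key from each record and returns them
    as s3:// URIs. The context argument is unused here.
    """
    processed = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # Object keys arrive URL-encoded (for example, spaces become '+').
        key = urllib.parse.unquote_plus(record["s3"]["object"]["key"])
        processed.append(f"s3://{bucket}/{key}")
    return {"processed": processed}


if __name__ == "__main__":
    # Hypothetical, trimmed-down S3 event for local testing.
    sample_event = {
        "Records": [
            {"s3": {"bucket": {"name": "example-bucket"},
                    "object": {"key": "uploads/my+report.csv"}}}
        ]
    }
    print(handler(sample_event, None))
```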

Lambda supports a variety of programming languages, including Node.js, Python, Java, Ruby, Go, and .NET. Lambda uses a pay-as-you-go model: you are billed per request and for compute duration, measured in GB-seconds (execution time multiplied by the memory allocated to the function). Lambda is a powerful tool for building serverless applications, event-driven workflows, and data processing pipelines, among a seemingly unlimited number of other use cases.
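The billing model boils down to simple arithmetic: a per-request charge plus a duration charge in GB-seconds. The sketch below assumes example x86 on-demand rates ($0.20 per million requests and $0.0000166667 per GB-second) and ignores the free tier and per-millisecond rounding; check the AWS Lambda pricing page for the current rates in your Region.

```python
def lambda_cost(invocations, avg_duration_ms, memory_mb,
                price_per_million_requests=0.20,
                price_per_gb_second=0.0000166667):
    """Estimate Lambda cost as a request charge plus a GB-second duration charge.

    Default prices are example rates (an assumption); free tier is ignored.
    """
    request_cost = invocations / 1_000_000 * price_per_million_requests
    # GB-seconds = number of invocations x seconds per invocation x memory in GB.
    gb_seconds = invocations * (avg_duration_ms / 1000) * (memory_mb / 1024)
    duration_cost = gb_seconds * price_per_gb_second
    return request_cost + duration_cost


# 1 million invocations averaging 200 ms at 512 MB of memory.
print(f"${lambda_cost(1_000_000, 200, 512):.2f}")
```

Here the 100,000 GB-seconds of compute cost far more than the requests themselves, which is why trimming duration (including startup time) matters.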

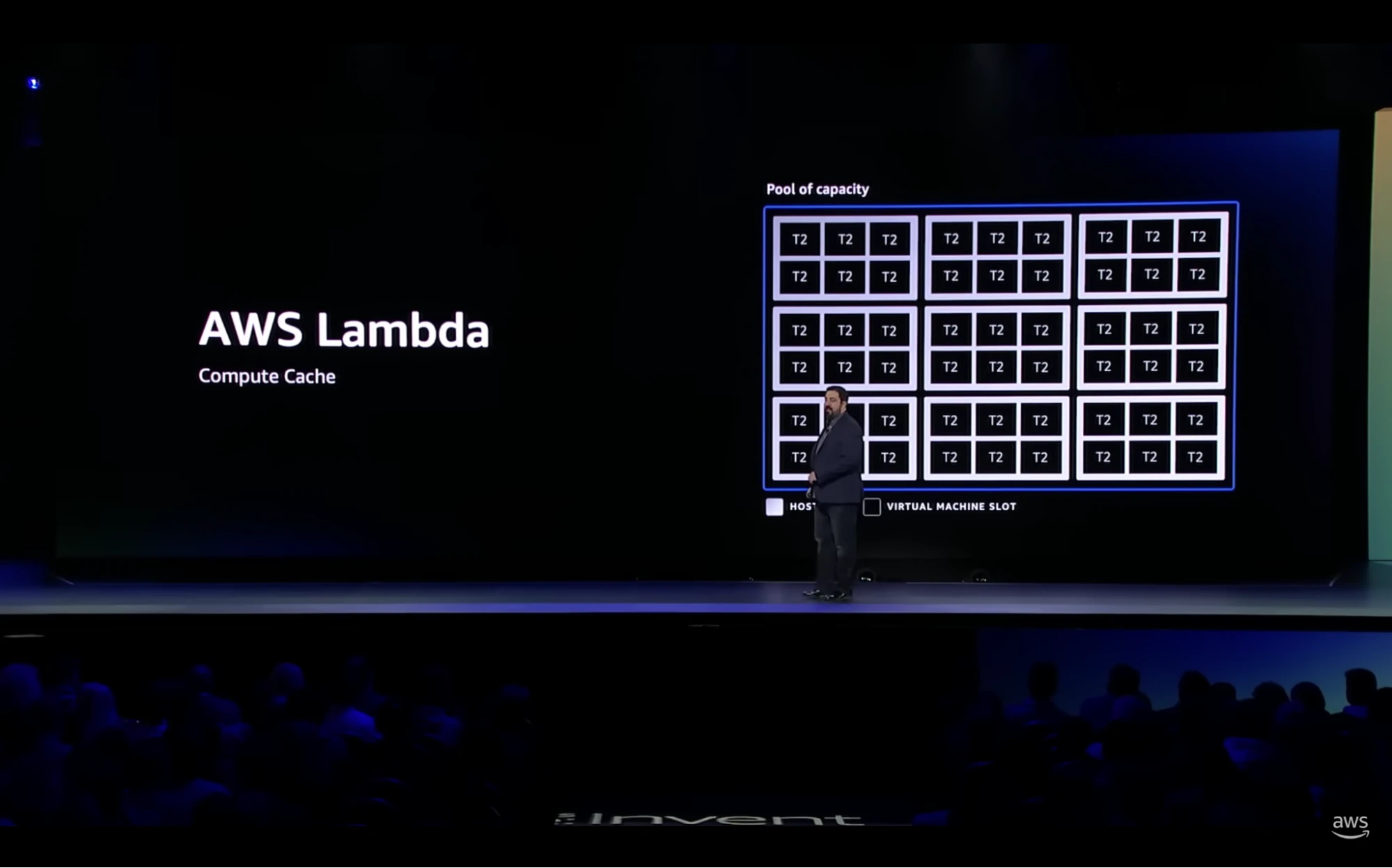

You can think of Lambda as a big cache, where the cached items are not units of data but units of compute. To improve efficiency and security, AWS divides a huge pool of compute into a large number of “slots”. Each slot is a virtual machine on standby, ready to run a customer’s function on demand and with high concurrency. We’ll learn more about the underlying concepts that power Lambda functions, such as microVMs and Firecracker, later in this blog.

When a customer invokes a Lambda function, the request is routed to a “slot” whose virtual machine can handle that particular function. This is the happy path: a cache hit. Now, what happens when the requested function is not loaded in the cache? You guessed it: a cache miss. When a cache miss occurs, Lambda must replace the contents of a slot with the requested function, and loading it comes with a latency cost.
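The hit/miss behavior can be sketched as a tiny simulation. This is a toy model under stated assumptions: each slot holds exactly one function, the cache evicts the least recently used slot (Lambda's real placement and eviction policy is not public), and concurrency is ignored. SlotCache is a hypothetical name.

```python
from collections import OrderedDict


class SlotCache:
    """Toy model of a compute cache: a fixed number of slots, each holding
    one loaded function, with least-recently-used (LRU) eviction."""

    def __init__(self, num_slots):
        self.num_slots = num_slots
        self.slots = OrderedDict()  # function name -> True, oldest first
        self.hits = 0
        self.misses = 0

    def invoke(self, function_name):
        if function_name in self.slots:
            # Cache hit: a slot already has this function loaded.
            self.slots.move_to_end(function_name)
            self.hits += 1
            return "warm"
        # Cache miss: evict the least recently used slot if full, then load.
        self.misses += 1
        if len(self.slots) >= self.num_slots:
            self.slots.popitem(last=False)
        self.slots[function_name] = True
        return "cold"


cache = SlotCache(num_slots=2)
print([cache.invoke(f) for f in ["orders", "orders", "users", "reports", "orders"]])
```

Replaying this trace against two slots yields one warm hit and four cold starts: loading reports evicts orders just before orders is called again.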

The virtual machines that power Lambda spin up quickly, but not quickly enough to launch one per function call, so AWS Lambda keeps part of its compute cache as launched but uninitialized slots. This reduces launch latency, but it also means fewer Lambda functions are loaded into memory at any given time, which results in more cache misses. Caches are great at decreasing average latency because most requests find their data (or function) already loaded in memory. However, when a function is not loaded, the request takes much longer to serve. This slow path is a form of tail latency called a “cold start.”
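A small calculation shows why cold starts count as tail latency: a few slow requests barely move the median but dominate the 99th percentile. The latencies and the 2% cold start rate below are hypothetical, and the nearest-rank percentile is one common definition among several.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile: the smallest sample with at least p% of
    all samples at or below it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical workload: 1,000 invocations, 2% of which hit a cold start.
warm_ms, cold_ms = 20, 1500
latencies = [warm_ms] * 980 + [cold_ms] * 20

mean_ms = sum(latencies) / len(latencies)
print(f"mean={mean_ms:.1f} ms, p50={percentile(latencies, 50)} ms, "
      f"p99={percentile(latencies, 99)} ms")
```

With these numbers the median stays at 20 ms while the P99 jumps to the full 1,500 ms cold start.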

When it comes to AWS Lambda, cold starts happen when a function hasn’t been invoked in a while or when load becomes high enough that cached functions are replaced frequently. These slowdowns, especially during peak traffic, are exactly what we don’t want. One solution is to increase the size of the cache, but that increases cost, creating a tug-of-war between cost and performance.
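The cost side of this tug-of-war can be illustrated by replaying the same request trace against caches of different sizes, treating slot count as a proxy for cost. This sketch assumes LRU eviction and a hypothetical round-robin trace over four functions; count_misses is a hypothetical helper.

```python
from collections import OrderedDict


def count_misses(trace, num_slots):
    """Replay a request trace against an LRU cache with num_slots slots
    and return how many requests were cache misses (cold starts)."""
    slots = OrderedDict()
    misses = 0
    for fn in trace:
        if fn in slots:
            slots.move_to_end(fn)  # hit: mark as most recently used
            continue
        misses += 1
        if len(slots) >= num_slots:
            slots.popitem(last=False)  # evict least recently used
        slots[fn] = True
    return misses


# Hypothetical trace: four functions invoked round-robin, 5 rounds (20 requests).
trace = ["a", "b", "c", "d"] * 5
for num_slots in (2, 3, 4, 5):
    print(num_slots, "slots ->", count_misses(trace, num_slots), "misses")
```

Here 2 or 3 slots miss on all 20 requests, 4 slots incur only the 4 unavoidable first-time misses, and a fifth slot adds cost without removing any cold starts.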

It became clear that AWS needed to resolve this tug-of-war and give Lambda customers the most efficient solution at the best possible price. The answer was Firecracker, a purpose-built virtualization technology for serverless computing that improves the efficiency of Lambda’s compute cache. Firecracker spins up virtual machines (called microVMs) that are significantly smaller than traditional VMs. By leveraging microVMs, AWS can increase the size of its compute cache without compromising on cost. Firecracker lets Lambda store more functions in its cache, while also shutting down and restarting cache slots in a fraction of the time it takes to shut down a traditional VM and spin up a new one.
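Why smaller VMs mean a bigger cache comes down to arithmetic: if every slot pays a fixed per-VM memory overhead, shrinking that overhead lets more slots fit on the same host. The host size and both overhead figures below are hypothetical round numbers chosen for illustration, not published Lambda or Firecracker measurements, and CPU limits are ignored.

```python
def slots_per_host(host_memory_mib, function_memory_mib, vm_overhead_mib):
    """Number of function slots that fit on one host when each slot needs
    the function's memory plus a fixed per-VM overhead (memory only)."""
    return host_memory_mib // (function_memory_mib + vm_overhead_mib)


HOST_MIB = 256 * 1024  # hypothetical 256 GiB host
FUNCTION_MIB = 128     # hypothetical function memory setting

print(slots_per_host(HOST_MIB, FUNCTION_MIB, vm_overhead_mib=512))  # hypothetical heavier VM
print(slots_per_host(HOST_MIB, FUNCTION_MIB, vm_overhead_mib=5))    # hypothetical microVM
```

With these made-up numbers, the same host holds 409 slots with the heavier VMs and 1,971 with the lighter ones.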

The introduction of Firecracker delivered a 50% improvement in cold start latency compared to traditional VMs. AWS could have stopped there, but its obsession with customer satisfaction pushed it one step further with AWS Lambda SnapStart.

Before Lambda SnapStart, Java users had few ways to shorten the startup time of their Lambda functions. One of the few options was to limit the JVM’s tiered JIT compilation to level 1 (`-XX:TieredStopAtLevel=1`). At level 1, the JVM compiles code only with the C1 compiler, without generating profiling data and without using the C2 compiler. C1 compiles quickly and favors fast startup, whereas C2 uses more memory but produces the best-performing code for long-running workloads. Although this was a useful tweak for Java-based Lambda functions, AWS wanted to go further.
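One common way to apply this setting on Lambda is the JAVA_TOOL_OPTIONS environment variable, which the JVM reads at startup. The sketch below only builds the keyword arguments for the boto3 Lambda client's update_function_configuration call; the function name and the STAGE variable are hypothetical, and the actual API call is left as a comment so the snippet runs without AWS credentials.

```python
TIERED_C1_ONLY = "-XX:+TieredCompilation -XX:TieredStopAtLevel=1"


def tiered_compilation_update(function_name, existing_variables=None):
    """Build kwargs for boto3's Lambda update_function_configuration that set
    JAVA_TOOL_OPTIONS so the JVM stops tiered compilation at level 1 (C1 only).

    update_function_configuration replaces the function's whole set of
    environment variables, so existing ones are merged in here.
    """
    variables = dict(existing_variables or {})
    variables["JAVA_TOOL_OPTIONS"] = TIERED_C1_ONLY
    return {"FunctionName": function_name, "Environment": {"Variables": variables}}


kwargs = tiered_compilation_update("my-java-function", {"STAGE": "prod"})  # hypothetical names
print(kwargs)
# To apply (requires boto3 and AWS credentials):
# boto3.client("lambda").update_function_configuration(**kwargs)
```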

Starting a new execution environment involves several phases. The initialization phase takes the longest to complete and varies with a number of factors, including the programming language of the function being loaded. According to AWS’s SVP of Utility Computing, Peter DeSantis, initialization time for Java, the language with the longest cold start times, depends on three main factors: JVM startup, decompressing and loading the class code, and running the classes’ initialization code. With SnapStart, Lambda runs this initialization once when you publish a function version and then takes a snapshot of the initialized microVM’s state. On a cache miss, Lambda resumes the function from this function-specific snapshot rather than a common snapshot, so cold starts skip the initialization phase and the main cause of cold start latency disappears.
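The difference between re-running initialization and restoring from a snapshot can be modeled in a few lines. This is only an analogy: copy.deepcopy stands in for Firecracker's microVM memory and disk snapshot, and ExecutionEnvironment, publish_and_snapshot, and cold_start are hypothetical names, not Lambda APIs.

```python
import copy


class ExecutionEnvironment:
    """Hypothetical stand-in for a function's execution environment."""

    init_runs = 0  # how many times the expensive init phase has run

    def __init__(self):
        self.state = None

    def initialize(self):
        # Stands in for JVM startup, class loading, and class initialization.
        ExecutionEnvironment.init_runs += 1
        self.state = {"config": "loaded", "clients": ["db", "s3"]}
        return self


def publish_and_snapshot():
    """Run init once and keep a copy of the post-initialization state."""
    return copy.deepcopy(ExecutionEnvironment().initialize())


def cold_start(snapshot=None):
    """Without a snapshot every cold start re-runs init; with one, restore it."""
    if snapshot is None:
        return ExecutionEnvironment().initialize()
    return copy.deepcopy(snapshot)  # resume from already-initialized state


snapshot = publish_and_snapshot()
environments = [cold_start(snapshot) for _ in range(3)]
print(ExecutionEnvironment.init_runs)  # init ran once, at publish time
```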

It’s worth noting that Lambda SnapStart is not enabled by default. Lambda functions with supported runtimes must be explicitly configured to use SnapStart, a setting that resides in the basic settings of the Lambda function. SnapStart applies only to published function versions, not to $LATEST.
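If you prefer to script the change rather than use the console, a minimal sketch with boto3 might look like the following. It assumes a boto3 Lambda client and a hypothetical function name; the SnapStart parameter, the function_updated waiter, and publish_version are boto3 Lambda calls, but verify them against the boto3 version you use.

```python
def enable_snapstart(lambda_client, function_name):
    """Enable SnapStart and publish a version so Lambda initializes the
    function and takes a snapshot. lambda_client is expected to be a boto3
    Lambda client, e.g. boto3.client("lambda")."""
    # Turn SnapStart on; it only applies to published versions.
    lambda_client.update_function_configuration(
        FunctionName=function_name,
        SnapStart={"ApplyOn": "PublishedVersions"},
    )
    # Wait until the configuration change has finished applying.
    lambda_client.get_waiter("function_updated").wait(FunctionName=function_name)
    # Publishing a version triggers initialization and the snapshot.
    response = lambda_client.publish_version(FunctionName=function_name)
    return response["Version"]


# Usage (requires boto3 and AWS credentials; the function name is hypothetical):
# import boto3
# version = enable_snapstart(boto3.client("lambda"), "my-java-function")
```

Invoke the returned version (or an alias pointing to it) to benefit from the snapshot.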

With the introduction of Lambda SnapStart, AWS reduced Lambda tail latency to the point where cache misses are nearly indistinguishable from cache hits, with no additional charge for Java functions. AWS cites up to a 90% reduction in cold start times for functions on supported runtimes.

AWS ran a performance test on a Java Lambda function in three configurations: without SnapStart, with SnapStart, and with SnapStart plus JVM tiered compilation. In short, the results (in milliseconds) are as follows:

| Optimization | P0 (ms) | P99 (ms) | Improvement vs. previous row |
|---|---|---|---|
| Without SnapStart | 4913 | 5994 | |
| SnapStart Without Optimization | 898 | 1405 | ~77-82% |
| SnapStart with Tiered Compilation | 753 | 1124 | ~16-20% |
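The percentages in the last column can be recomputed directly from the latencies in the table. The sketch below measures each configuration against the one before it, which is how the improvement column appears to be defined; the row labels are shortened.

```python
# P0 and P99 latencies in milliseconds, copied from the table.
rows = [
    ("Without SnapStart", 4913, 5994),
    ("SnapStart without optimization", 898, 1405),
    ("SnapStart with tiered compilation", 753, 1124),
]


def reduction_pct(before_ms, after_ms):
    """Percentage reduction in latency going from before_ms to after_ms."""
    return (before_ms - after_ms) / before_ms * 100


# Compare each configuration with the one before it.
for (_, prev_p0, prev_p99), (name, p0, p99) in zip(rows, rows[1:]):
    print(f"{name}: P0 -{reduction_pct(prev_p0, p0):.1f}%, "
          f"P99 -{reduction_pct(prev_p99, p99):.1f}%")
```

SnapStart alone cuts P0 by about 82% and P99 by about 77%; adding tiered compilation trims a further 16% (P0) and 20% (P99).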

Customers on supported runtimes can now depend on consistent, fast performance from AWS Lambda during peak load and largely put the worry of cold start latency behind them.

From migrating and modernizing your infrastructure, building cloud native applications & leveraging data for insights, to implementing DevOps practices within your organization, Caylent can help set you up for innovation on the AWS Cloud. Get in touch with our team to discuss how we can help you achieve your goals.

Scott Erdmann is a Cloud Architect at Caylent who ensures customers build applications on AWS the right way, following best practices. Scott graduated from the University of Minnesota, Twin Cities, and is always curious about new and emerging technologies. Scott grew up in the Midwest and enjoys the nice summer months in Minnesota but also loves snowboarding during the winter.
